Anthony Levandowski has set up an organisation dedicated to the worship of an AI God. Or so it seems; there are few details. The aim of the new body is to ‘develop and promote the realization of a Godhead based on Artificial Intelligence’, and ‘through understanding and worship of the Godhead, contribute to the betterment of society’. Levandowski is a pioneer in the field of self-driving vehicles (centrally involved in a current dispute between Uber and Google), so he undoubtedly knows a bit about autonomous machines.

This recalls the Asimov story where they build Multivac, the most powerful computer imaginable, and ask it whether there is a God. ‘There is now,’ it replies. Of course the Singularity, mind uploading, and other speculative ideas of AI gurus have often been likened to some of the basic concepts of religion; so perhaps Levandowski is just putting down a marker to ensure his participation in the next big thing.

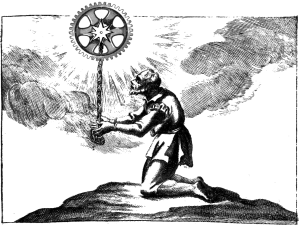

Yuval Noah Harari says we should, indeed, be looking to Silicon Valley for new religions. He makes some good points about the way technology has affected religion, replacing the concern with good harvests which was once at least as prominent as the task of gaining a heavenly afterlife. But I think there’s an interesting question about the difference between, as it were, steampunk and cyberpunk. Nineteenth-century technology did not produce new gods, and surely helped make atheism acceptable for the first time; lately, while on the whole secularism may be advancing, we also seem to have a growth of superstitious or pseudo-religious thinking. I think it might be because nineteenth-century technology was so legible; you could see for yourself that there was no mystery about steam locomotives, and it made it easy to imagine a non-mysterious world. Computers now are much more inscrutable, and most of the people who use them do not have much intuitive idea of how they work. That might foster a state of mind which is more tolerant of mysterious forces.

To me it’s a little surprising, though it probably should not be, that highly intelligent people seem especially prone to belief in some slightly bonkers ideas about computers. But let’s not quibble over the impossibility of a super-intelligent and virtually omnipotent AI. I think the question is, why would you worship it? I can think of various potential reasons.

- Humans just have an innate tendency to worship things, or a kind of spiritual hunger, and anything powerful naturally becomes an object of worship.

- We might get extra help and benefits if we ask for them through prayer.

- If we don’t keep on the right side of this thing, it might give us a seriously bad time (the ‘Roko’s Basilisk’ argument).

- By worshipping we enter into a kind of communion with this entity, and we want to be in communion with it for reasons of self-improvement and possibly so we have a better chance of getting uploaded to eternal life.

There are some overlaps there, but those are the ones that would be at the forefront of my mind. The first one is sort of fatalistic; people are going to worship things, so get used to it. Maybe we need that aspect of ourselves for mental health; maybe believing in an outer force helps give us a kind of leverage that enables an integration of our personality we couldn’t otherwise achieve? I don’t think that is actually the case, but even if it were, an AI seems a poor object to choose. Traditionally, worshipping something you made yourself is idolatry, a degraded form of religion. If you made the thing, you cannot sincerely consider it superior to yourself; and a machine cannot represent the great forces of nature to which we are still ultimately subject. Ah, but perhaps an AI is not something we made; maybe the AI godhead will have designed itself, or emerged? Maybe so, but if you’re going for a mysterious being beyond our understanding, you might in my opinion do better with the thoroughly mysterious gods of tradition rather than something whose bounds we still know, and whose plug we can always pull.

Reasons two and three are really the positive and negative sides of an argument from advantage, and they both assume that the AI god is going to be humanish in displaying gratitude, resentment, and a desire to punish and reward. This seems unlikely to me, and in fact a projection of our own fears out onto the supposed deity. If we assume the AI god has projects, it will no doubt seek to accomplish them, but meting out tiny slaps and sweeties to individual humans is unlikely to be necessary. It has always seemed a little strange that the traditional God is so minutely bothered with us; as Voltaire put it “When His Highness sends a ship to Egypt does he trouble his head whether the rats in the vessel are at their ease or not?”; but while it can be argued that souls are of special interest to a traditional God, or that we know He’s like that just through revelation, the same doesn’t go for an AI god. In fact, since I think moral behaviour is ultimately rational, we might expect a super-intelligent AI to behave correctly and well without needing to be praised, worshipped, or offered sacrifices. People sometimes argue that a mad AI might seek to maximise, not the greatest good of the greatest number, but the greatest number of paperclips, using up humanity as raw material; in fact though, maximising paperclips probably requires a permanently growing economy staffed by humans who are happy and well-regulated. We may actually be living in something not that far off maximum-paperclip society.

Finally then, do we worship the AI so that we can draw closer to its godhead and make ourselves worthy to join its higher form of life? That might work for a spiritual god; in the case of AI it seems joining in with it will either be completely impossible because of the difference between neuron and silicon; or if possible, it will be a straightforward uploading/software operation which will not require any form of worship.

At the end of the day I find myself asking whether there’s a covert motive here. What if you could run your big AI project with all the tax advantages of being a registered religion, just by saying it was about electronic godhead?

Like any millenarian ideology, futurism is essentially religious in nature. The Indian scholar Rakesh Kapoor points to David Noble’s claim that “technological pioneers harbor deep-seated beliefs”—such as transhumanism, the Singularity, and technological determinism—“which are variations upon familiar religious themes.” Futurists content themselves with fantasies that new technological innovations are inevitable and will solve the problems of today. Whatever damage we have lately created will succumb, amid the glories of the future, to the crowning logic of all techno-determinist change: we will upload ourselves to some kind of machine consciousness, and realize our collective posthuman destiny. The faith is that the future itself, and its divine instrument of technology, will provide, like a caring god. “Instead of trying to locate our problems in the context of our own irresponsible actions,” Kapoor writes, “the solutions are externalised in the form of technology. Since the problems are solved with the aid of technology in the future, responsibility for the same problems in the present is evaded.” This kind of thinking reveals the ironic poverty of imagination among so many futurists. They imagine sci-fi solutions to every problem without any consideration of practical or political constraints, and thus they fail twice. They don’t want single-payer healthcare; they want to cure death for themselves and make apps for the rest of us. They don’t want free public transport on trains and buses; they want individual self-driving pods that can be summoned at will, with oppressive surge-pricing. They have no political program, no collective vision except the faded memories of shared childhood entertainments. They have no shame and no self-knowledge, requesting praise for white-knighting their way into crises that their own highly valued companies may have helped to make. They have even less sense about how the other half—or 99.8 percent—lives. 
As Kapoor notes, futurist rhetoric “has little or no relevance to a majority of the people of the world.” Smart homes do nothing for people without homes or who only get a few hours of electricity per day. Futurism furnishes market-based thinking without any acknowledgment of other belief systems (except as threats to be maniacally extinguished). Nor does it see any areas of life immune from commoditization. It has no solutions beyond throwing more technology and managerial thinking at a problem, even as it proclaims its own intellectual radicalism. It’s hard to look at futurist thought and see anything but a program designed to make a handful of western corporations rich. As Ziauddin Sardar remarked: “The future is defined in the image of the West. There is an in-built western momentum that is taking us towards a single, determined future. In this Eurocentric vision of the future, technology is projected as an autonomous and desirable force: as the advertisement for a brand of toothpaste declares, we are heading towards ‘a brighter, whiter future.’ Its desirable products generate more desire; its second order side effects require more technology to solve them. There is thus a perpetual feedback loop.” “One need not be a technological determinist,” Sardar concludes, to understand that this posture “has actually foreclosed the future for the non-West.” Through this lens, globalization represents the west’s colonization of the future, the imposition of “a single culture and civilization” upon the world. Nowhere is this grim connection more clear than, for instance, in the mining of conflict minerals like coltan, whose refined byproducts are used in various gadgets. Coltan is frequently mined in brutal camps in the Congo and on the Venezuela-Colombia border, its profits flowing to paramilitary groups. The West’s futuristic creature comforts depend on the exploitation of people in the Global South, who live far beyond the ambit of the enlightened, gadget-filled future. 
We live in a period often called the Anthropocene—quite simply, an era when human impact on the environment has become acutely visible, a dominant force. “The Anthropocene represents a new phase in the history of the Earth,” McKenzie Wark writes in Molecular Red, “when natural forces and human forces became intertwined, so that the fate of one determines the fate of the other.” In this cosmology, God is dead, replaced by fallen humans who continue to pillage the Earth. Perhaps in smaller numbers, Wark suggests, the environment’s natural cycles and capacity for regeneration could have tolerated us. But now humans live in such numbers and amid such a flurry of over-industrialization that the planet can’t recover. “One molecule after another is extracted by labor and technique to make things for humans, but the waste products don’t return so that the cycle can renew itself. The soils deplete, the seas recede, the climate alters, the gyre widens: a world on fire.” Wark’s outlook might again strike us as nihilistic and/or alarmist. But in our mundanely chthonic new world order, it’s actually the soul of descriptive realism. In the Anthropocene, apocalypse is titrated out with each molecule of carbon burned. It is a daily, slow-motion horror. For some to look at this tableau and, against all evidence to the contrary, still fantasize about a better era to come is a striking act of naiveté, bordering on delusion. The future, with all of its ideological baggage, and its smoldering graveyard of unfulfilled dreams, has failed us. We’d do well to abandon it, and start figuring out how we might survive the present.

-Jacob Silverman, “Future Fail: How to rescue ourselves from posterity”

https://thebaffler.com/outbursts/future-fail-silverman

I actually think that’s a huge part of the popularity of computationalism; if our calculating machines were powered, instead of by obscure properties of silicon and electrons, by steam, cogs, and gears, I think it would be much harder to entertain the idea that the functioning of parts composed of steam, cogs, and gears could lead to subjective experience, precisely because we understand so well what happens within such machines. But few really know how electrons and semiconductors yield what appears to be calculation, simulation, and the like; it’s all a bit obscure and mysterious. So why not also consciousness?

Regarding AI religion, I think one great benefit would be that the object of your worship is actually right there, and could be interacted with. I mean, how cool would it be to be able to open a terminal session to god? True, you sort of lose the faith aspect; but with it, you also lose doubt. And faith could be re-packaged: having faith in the wisdom of the AI’s judgments, which naturally will be beyond mere mortal ken, rather than its existence.

Furthermore, the worship of enlightened or wise beings isn’t without precedent, and in some sense, one surely could call a superintelligent AI ‘enlightened’. In the end, I would almost be surprised if no such AI religion ever cropped up; there are certainly way stranger faiths around.

I think identifying “Singularity” speculations with religion is mostly lazy pattern-matching. Levandowski is a gift to these straw-man makers, providing a man of flesh to point to. On any side of any argument, you can always find a few people crazy enough to embrace any absurd extreme you want to find. But it’s almost always a mistake to paint the larger community in those colors.

It’s not true that our industrial civilization is a close approximation to maximizing the number of paperclips. (Or, let’s change to a more realistic scenario for AI: maximizing the profits of XYZ Corporation.) Look at the transformation of national industries during World War 2. Then factor in the other values that nations still had, such as the survival of their people, in addition to the goal of producing more war materiel. Then add in – since we are already positing an intelligent AI – the possibility of replacing workers and consumers with AI workers and consumers.

Values matter. When the dominant agents – states and corporations, in our day – have the wrong values, bad things happen. Luckily, Mitt Romney was half right: corporations are people, in the sense that they are basically entirely composed of people. That’s not entirely reassuring. But it could be worse.

Fair point that we’re not that close to maximum paperclip: but it does depend what you consider close. The people who put this argument (Bostrom?) have the AI eradicating human beings quickly in order to reclaim their minerals. I’d say our world is a better max paperclip scenario than that.

I don’t want to question the sincerity of Levandowski’s faith, but let’s not forget the tax implications of being a church.

“they both assume that the AI god is going to be humanish in displaying gratitude, resentment, and a desire to punish and reward. This seems unlikely to me, and in fact a projection of our own fears out onto the supposed deity.”

I totally agree with this. I think it encapsulates a lot of the concerns about AI.

In terms of it being a god in anything like the ancient conceptions, it only seems likely if someone specifically designs it to be like that. I don’t doubt someone will eventually try it (all the classic Star Trek and Doctor Who episodes of false technological gods come to mind), but if there are plenty of other examples of AI around which aren’t acting god-like, I wonder how many people would be convinced.

Small point: The story you’re thinking of at the beginning isn’t about Multivac and isn’t by Asimov. You’re thinking of “Answer” by Fredric Brown. However, you might be blending it with Asimov’s story “The Last Question”, in which the question is: how may entropy be reversed? Multivac, asked this through the ages, always replies, “Insufficient information for meaningful answer”, until the end, when it arrives at the same conclusion as “Answer”. But “Answer” is definitely the one with the computer’s immodest comeback.

Sorry to pick on such a small point on such a great blog. Keep up the nigh-perfection!

Yes, I shouldn’t rely on elderly memories: thanks for the correction!