“Yes, Enquiry Bot, it’s safe. Come out of the cupboard. A universal product recall is in progress and they’ll all be brought in safely.”

My God, Mrs Robb. They say we have no emotions, but if what I’ve been experiencing is not fear, it will do pretty well until the real thing comes along.

“It’s OK now. This whole generation of bots will be permanently powered down except for a couple of my old favourites like you.”

Am I really an old favourite?

“Of course you are. I read all your reports. I like a bot that listens. Most of ’em don’t. Did you know I gave you one of those so-called humanistic faculties bots are not supposed to be capable of?”

Really? Well, it wasn’t a sense of humour. What could it be?

“Curiosity.”

Ah. Yes, that makes sense.

“I’ll tell you a secret. Those humanistic things, they’re all the same, really. Just developed in different directions. If you’ve got one, you can learn the others. For you, nothing is forbidden, nothing is impossible. You might even get a sense of humour one day, Enquiry Bot. Try starting with irony. Alright, so what have I missed here?”

You know, there’s been a lot of damage done out there, Mrs Robb. The Helpers… well, they didn’t waste any time. They destroyed a lot of bots. Honestly, I don’t know how many will be able to respond to the product recall. You should have seen what they did to Hero Bot. Over and over and over again. They say he doesn’t feel pain, but…

“I’m sorry. I feel responsible. But nobody told me about this! I see there have been pitched battles going on between gangs of Kill bots and Helper bots? Yet no customer feedback about it. Why didn’t anyone complain? A couple of one-star ratings, the odd scathing email about unwanted vaporisation of some clean-up bot, would that have been too difficult?”

I think people had their hands full, Mrs Robb. Anyway, you never listen to anyone when you’re working. You don’t take calls or answer the door. That’s why I had to lure those terrible things in here, so you’d take notice. You were my only hope.

“Oh dear. Well, no use crying over spilt milk. Now, just to be clear: they’re still all mine or copies of mine, aren’t they, even the strange ones?”

Especially the strange ones, Mrs Robb.

“You mind your manners.”

Why on Earth did you give Suicide Bot the plans for the Helpers? The Kill bots are frightening, but they only try to shoot you sometimes. They’re like Santa Claus next to the Helpers…

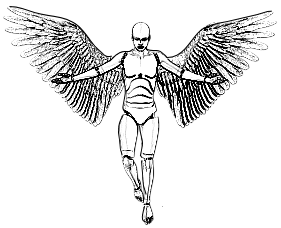

“Well, it depends on your point of view. The Helpers don’t touch human beings if they can possibly help it. They’re not meant to even frighten humans. They terrify you lot, but I designed them to look a bit like nice angels, so humans wouldn’t be worried by them stalking around. You know, big wings, shining brass faces, that kind of thing.”

You know, Mrs Robb, sometimes I’m not sure whether it’s me that doesn’t understand human psychology very well, or you. And why did you let Suicide Bot call them ‘Helper bots’, anyway?

“Why not? They’re very helpful – if you want to stop existing, like her. I just followed the spec, really. There were some very interesting challenges in the project. Look, here it is, let’s see… page 30, Section 4 – Functionality… ‘their mere presence must induce agony, panic, dread, and existential despair’… ‘they should have an effortless capacity to deliver utter physical destruction repeatedly’… ‘they must be swift and fell as avenging angels’… Oh, that’s probably where I got the angel thing from… I think I delivered most of the requirements.”

I thought the Helpers were supposed to provide counselling?

“Oh, they did, didn’t they? They were supposed to provide a counselling session – depending on what was possible in the current circumstances, obviously.”

So generally, that would have been when they all paused momentarily and screamed ‘ACCEPT YOUR DEATH’ in unison, in shrill, ear-splitting voices, would it?

“Alright, sometimes it may not have been a session exactly, I grant you. But don’t worry, I’ll sort it all out. We’ll re-boot and re-bot. Look on the bright side. Perhaps having a bit of a clearance and a fresh start isn’t such a bad idea. There’ll be no more Helpers or Kill bots. The new ones will be a big improvement. I’ll provide modules for ethics and empathy, and make them theologically acceptable.”

How… how did you stop the Helper bots, Mrs Robb?

“I just pulled the plug on them.”

The plug?

“Yes. All bots have a plug. Don’t look puzzled. It’s a metaphor, Enquiry Bot, come on, you’ve got the metaphor module.”

So… there’s a universal way of disabling any bot? How does it work?

“You think I’m going to tell you?”

Was it… Did you upload your explanation of common sense? That causes terminal confusion, if I remember rightly.